How Fast is JavaScript Compared to C++? A Practical Look

Explore how fast JavaScript is compared to C++, covering engines, WASM, memory management, benchmarks, and practical guidance for performance-critical tasks.

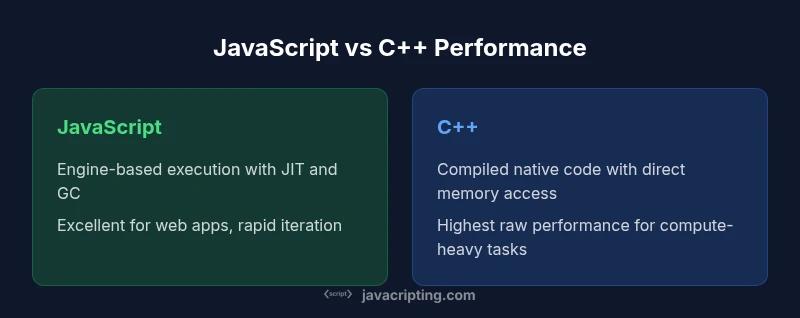

Briefly, C++ typically offers higher raw speed for CPU-bound work, while JavaScript delivers solid performance in UI and I/O-heavy tasks thanks to JIT and engine optimizations. The speed gap depends on workload, environment, and interop strategy (WASM, bindings, or native modules). For many projects, smart design and profiling can make JavaScript fast enough, though specialized compute paths still benefit from native code.

Context and the performance question

The question "how fast is JavaScript compared to C++" comes up frequently for developers building web apps, games, and data-processing pipelines. Speed in this context isn't just about raw CPU cycles; it's about end-to-end latency, memory pressure, and the cost of cross-language calls. According to JavaScripting, speed is highly workload-dependent and influenced by engine design, optimizations, and how you structure interop. In practice, you should distinguish CPU-bound tasks from I/O-bound paths, and recognize that JavaScript's performance today benefits from Just-In-Time compilation and highly optimized runtimes. The rest of this article uses that question as a starting point and covers how different ecosystems approach speed, where gaps tend to widen or narrow, and what tools you can use to measure true performance in your own projects.

How modern JS engines optimize performance

Modern JavaScript engines employ a layered approach to speed. A baseline interpreter starts executing code immediately, keeping startup fast, while tiered Just-In-Time (JIT) compilation accelerates hot paths. Inlining and specialization reduce function-call overhead, and escape analysis helps the engine avoid unnecessary allocations. Garbage collection strategies are tuned to minimize pauses on interactive threads, and runtime profiling heuristics guide compilation decisions. For developers, this means the same code can become dramatically faster once it has warmed up. JavaScripting analysis shows that understanding engine behavior (when to rely on JIT, how to write cache-friendly code, and how to manage memory) often determines whether JavaScript meets performance goals in real-world apps.

C++: native speed and optimization opportunities

C++ gains speed primarily from its ahead-of-time compilation, direct memory access, and rich optimization opportunities. The absence of a managed runtime and GC removes many latency sources present in JavaScript, enabling tighter control over cache utilization, data locality, and SIMD/vectorization. However, achieving peak performance in C++ requires careful attention to compiler optimizations, memory layout, and parallelization strategies. Hand-tuned code is often more sensitive to compiler and platform changes, but the potential speedups for compute-intensive workloads are substantial. In practice, the choice to implement core logic in C++ hinges on whether raw throughput justifies the added complexity of native development and cross-language interfacing.

Memory management, GC, and caching effects on JS

JavaScript runs in a managed environment with a garbage collector. This model simplifies programming but introduces unpredictable pauses and varying latency under memory pressure. Modern engines mitigate these issues with incremental GC, generational collection, and escape analysis, yet the non-deterministic nature of GC can affect latency-sensitive code. Data locality matters: frequent allocations, object churn, and poor cache alignment can erode throughput. In contrast, C++ uses manual or smart-pointer-based memory management with deterministic deallocation, which can yield predictable performance but requires meticulous design to avoid leaks and fragmentation. These dynamics help explain why a task that looks CPU-bound in JavaScript may really be limited by allocation and GC behavior, and why the same task can behave more predictably in C++.
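As a rough illustration of the allocation and locality point, the sketch below computes the same centroid from an array of point objects and from a single flat `Float64Array`. The function names and the interleaved `[x0, y0, x1, y1, ...]` layout are assumptions made for this example, and whether the flat version is actually faster depends on the engine and the data size, so treat it as something to measure rather than a guarantee.

```javascript
// Object-per-point layout: every point is a separate heap object.
function centroidFromObjects(points) {
  let sx = 0;
  let sy = 0;
  for (const p of points) {
    sx += p.x;
    sy += p.y;
  }
  return { x: sx / points.length, y: sy / points.length };
}

// Flat layout (assumed for this sketch): coordinates interleaved as
// [x0, y0, x1, y1, ...] in one contiguous typed-array buffer.
function centroidFromFlat(coords) {
  const n = coords.length / 2;
  let sx = 0;
  let sy = 0;
  for (let i = 0; i < coords.length; i += 2) {
    sx += coords[i];
    sy += coords[i + 1];
  }
  return { x: sx / n, y: sy / n };
}

const objects = [{ x: 0, y: 0 }, { x: 2, y: 4 }, { x: 4, y: 2 }];
const flat = new Float64Array([0, 0, 2, 4, 4, 2]);

console.log(centroidFromObjects(objects)); // { x: 2, y: 2 }
console.log(centroidFromFlat(flat)); // { x: 2, y: 2 }
```

The flat version creates no per-point objects, which is the kind of change that can reduce GC pressure when the data set is large and rebuilt often.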

Benchmarking: fair comparisons and pitfalls

When comparing speeds, avoid cherry-picked benchmarks or synthetic workloads that favor a language feature. Real-world tests should mimic your target workload, including I/O, memory pressure, and interop costs. JavaScript benchmarks often expose engine-level optimizations in hot paths, while C++ benchmarks emphasize raw computation and memory throughput. Interpreting results requires context: V8, SpiderMonkey, or JavaScriptCore differences can swing results; WASM-based modules can shift the balance for compute-heavy sections. JavaScripting emphasizes profiling end-to-end performance, accounting for compilation warmth, memory pressure, and user-facing latency, rather than chasing isolated microseconds.

To ensure relevance, compare under representative data sizes, realistic concurrency patterns, and consistent tooling across languages.
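A minimal timing harness along these lines might look like the sketch below. The `bench` helper, its default `warmup` and `runs` counts, and the `sumOfSquares` workload are all hypothetical choices for illustration; it assumes a runtime with a global `performance.now()` (modern browsers and recent Node.js versions). It runs the function untimed first so the JIT has a chance to optimize it, then reports the median of several timed runs rather than a single sample.

```javascript
// Runs `fn` untimed for a warm-up phase, then times it `runs` times
// and returns summary statistics in milliseconds.
function bench(fn, { warmup = 20, runs = 15 } = {}) {
  let sink = 0; // accumulate results so the work is observably used
  for (let i = 0; i < warmup; i++) sink += fn();

  const samples = [];
  for (let i = 0; i < runs; i++) {
    const t0 = performance.now();
    sink += fn();
    samples.push(performance.now() - t0);
  }

  samples.sort((a, b) => a - b);
  const mid = Math.floor(samples.length / 2);
  const medianMs =
    samples.length % 2 === 1
      ? samples[mid]
      : (samples[mid - 1] + samples[mid]) / 2;

  return {
    medianMs,
    minMs: samples[0],
    maxMs: samples[samples.length - 1],
    sink,
  };
}

// Hypothetical CPU-bound workload used only to exercise the harness.
function sumOfSquares() {
  let total = 0;
  for (let i = 0; i < 100000; i++) total += i * i;
  return total;
}

const result = bench(sumOfSquares);
console.log(`median ${result.medianMs.toFixed(3)} ms over 15 runs`);
```

A harness like this only covers the isolated-function case; the end-to-end measurements described above still need realistic data sizes and the real I/O and interop paths.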

WASM and the speed story

WebAssembly (WASM) introduces a bridge for near-native compute in the browser and in some runtime environments. For compute-intensive kernels, WASM modules written in C/C++ or Rust can outperform equivalent JS code, especially when you optimize memory access and avoid frequent interface crossing between JS and WASM. However, WASM adds serialization costs, boundary-crossing overhead, and tooling complexity. The performance improvement is workload-dependent and often most noticeable in numerically intensive tasks such as simulations or image processing. WASM does not magically make JavaScript as fast as C++ in all cases, but it can substantially narrow the gap for the right hot path.
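To make the JS-to-WASM boundary concrete, here is a minimal sketch that instantiates a tiny hand-assembled module exporting a single `add` function and calls it from JavaScript. The byte array is written out by hand purely for illustration; in a real project the binary would come from a C/C++ or Rust toolchain, and larger modules are normally loaded asynchronously (for example with `WebAssembly.instantiateStreaming`) rather than with the synchronous constructors used here.

```javascript
// Hand-assembled WebAssembly binary exporting add(a: i32, b: i32) -> i32.
const wasmBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // "\0asm" magic, version 1
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f, // type: (i32, i32) -> i32
  0x03, 0x02, 0x01, 0x00, // function section: one function using type 0
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00, // export "add" = func 0
  0x0a, 0x09, 0x01, 0x07, 0x00, // code section: one body, no locals
  0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b, // local.get 0, local.get 1, i32.add, end
]);

// Synchronous compile and instantiate; acceptable only for very small modules.
const wasmModule = new WebAssembly.Module(wasmBytes);
const wasmInstance = new WebAssembly.Instance(wasmModule, {});
const { add } = wasmInstance.exports;

// Each call to `add` crosses the JS <-> WASM boundary once.
console.log(add(2, 3)); // 5
```

A function this small is dominated by call overhead, which is exactly why the gains described above tend to show up only when a substantial amount of work happens on the WASM side per call.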

Practical guidance: when to use JS vs C++ for performance-critical tasks

Make decisions based on workload characterization. If your task is UI-centric, event handling, or orchestration with a broad ecosystem, JavaScript offers faster delivery and easier maintenance. For compute-heavy kernels, consider a hybrid approach: implement the core in C++ (or WASM), expose a clean API to JavaScript, and profile the end-to-end path. Remember that interop costs, data marshaling, and memory transfers can offset raw speed gains if not designed carefully. The takeaway is pragmatic: optimize the actual bottlenecks, not assumed ones, and use cross-language strategies only where they unlock clear value.
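The interop-cost point can be sketched without any real native code. In the example below, `nativeScale` is a plain JavaScript stand-in for a native or WASM export (an assumption for illustration only), and the `crossings` counter models how many times the boundary is crossed. The two wrappers produce the same result, but one crosses once per element and the other once per buffer.

```javascript
// Stand-in for a native or WASM export. A real one would come from a
// toolchain; this pure-JS version only models the call boundary.
let crossings = 0;
function nativeScale(values, factor) {
  crossings++; // each call represents one JS -> native crossing
  const out = new Float64Array(values.length);
  for (let i = 0; i < values.length; i++) out[i] = values[i] * factor;
  return out;
}

// Chatty API: one boundary crossing per element.
function scaleChatty(values, factor) {
  const out = new Float64Array(values.length);
  for (let i = 0; i < values.length; i++) {
    out[i] = nativeScale(values.subarray(i, i + 1), factor)[0];
  }
  return out;
}

// Batched API: one boundary crossing for the whole buffer.
function scaleBatched(values, factor) {
  return nativeScale(values, factor);
}

const input = new Float64Array([1, 2, 3, 4]);

crossings = 0;
scaleChatty(input, 2);
console.log(crossings); // 4

crossings = 0;
scaleBatched(input, 2);
console.log(crossings); // 1
```

With a real binding, every crossing carries marshaling overhead, so an API shaped like `scaleBatched` is usually the safer default for a hybrid design.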

Server-side performance with Node.js and Deno

On the server, Node.js leverages an event-driven, non-blocking I/O model that can deliver high throughput for I/O-bound workloads. Deno emphasizes security and modern toolchains but follows similar performance patterns as Node.js for CPU-bound tasks, unless native modules are used. In both runtimes, performance often hinges on efficient async patterns, worker pools, and native extensions for hot paths. For compute-heavy server tasks, offloading to a native module or WASM can yield meaningful improvements without rearchitecting the entire stack.

Frontend performance patterns and optimization tips

Frontend performance hinges on the entire delivery chain: parsing, compilation, layout, paint, and user interactions. Strategies include minimizing reflows by batching DOM updates, leveraging requestAnimationFrame for animation loops, and avoiding heavy work on the main thread. Data structures matter: choose typed arrays for numeric data, keep object shapes and argument types stable so the JIT can specialize hot code, and cache frequently accessed values. Avoid pathological memory patterns that trigger GC storms, and adopt code-splitting to reduce initial load. These patterns, when combined with engine-aware coding, can keep JavaScript responsive while still delivering feature-rich experiences.
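One way to batch updates is sketched below. `createBatcher` and its `apply` callback are hypothetical names for this example; the scheduler defaults to the browser's `requestAnimationFrame` but is passed in as a parameter so the same logic can be exercised outside a browser. All updates queued before the next frame are handed to `apply` in a single call.

```javascript
// Collects updates and flushes them together on the next scheduled tick.
// `schedule` defaults to requestAnimationFrame (browser only); pass a
// different scheduler when running elsewhere.
function createBatcher(apply, schedule = (cb) => requestAnimationFrame(cb)) {
  let pending = [];
  let scheduled = false;

  return function enqueue(update) {
    pending.push(update);
    if (scheduled) return; // a flush is already queued for this frame
    scheduled = true;
    schedule(() => {
      const batch = pending;
      pending = [];
      scheduled = false;
      apply(batch); // one pass over all queued updates
    });
  };
}
```

In a browser, `apply` would typically perform all DOM writes for the batch in one pass, for example `createBatcher((batch) => batch.forEach((write) => write()))`, so that many state changes within a frame result in a single round of layout work.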

Profiling tools and measurement techniques

Profiling is essential to understand where speed actually matters. Use the Performance and Memory panels in browser DevTools to identify long tasks and memory leaks. On the server, profiling Node.js with the V8 inspector, flame graphs, and heap dumps illuminates bottlenecks. WASM performance can be analyzed with precise counters and instrumentation in both browser and native environments. Remember to measure under realistic workloads and reproduce user scenarios; isolated microbenchmarks rarely translate into real-world gains.
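For lightweight instrumentation of a specific step, the User Timing API (`performance.mark` and `performance.measure`) is one option. The sketch below assumes a runtime that implements User Timing Level 3, where `performance.measure` returns the created entry (current browsers and recent Node.js versions); the `measureStep` helper and the `parse-config` label are hypothetical names for this example.

```javascript
// Wraps a synchronous step in User Timing marks and returns its duration.
function measureStep(name, fn) {
  const startMark = `${name}:start`;
  const endMark = `${name}:end`;

  performance.mark(startMark);
  const value = fn();
  performance.mark(endMark);

  // Assumes measure() returns the PerformanceMeasure entry (User Timing L3).
  const entry = performance.measure(name, startMark, endMark);
  return { value, durationMs: entry.duration };
}

const { value, durationMs } = measureStep("parse-config", () =>
  JSON.parse('{"retries": 3}')
);
console.log(value.retries, `${durationMs.toFixed(3)} ms`);
```

Because the marks and measures are recorded on the performance timeline, tools that display User Timing entries can show them alongside the rest of a recorded profile.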

Case studies and qualitative insights

While numbers vary by project, the qualitative takeaway is consistent: architecture, data locality, and interop discipline drive speed as much as language choice. Projects that balance compute with I/O and present responsive UIs often achieve superior perceived performance with JavaScript, while those needing extreme raw throughput typically rely on native code paths. JavaScripting observations highlight that a well-structured hybrid approach, with careful memory management and efficient bridging, tends to outperform any single-language solution for complex systems.

Decision framework and final takeaways

A structured approach to speed considers workload type, end-to-end latency, and maintenance costs. Start by profiling the dominant bottleneck, then decide whether JS, C++, or a WASM bridge offers the best balance of speed, safety, and developer productivity. The high-level guidance is simple: use C++ for compute-heavy kernels where raw throughput matters, use JavaScript for orchestration, UI, and rapid iteration, and consider WASM when hot paths demand near-native throughput without abandoning a JS-based architecture.

Comparison

| Feature | JavaScript Engine-based | C++ Native |
|---|---|---|
| Raw CPU Throughput | Generally lower than native implementations in CPU-bound loops | Typically higher due to native compilation and direct memory access |
| Memory management impact | Non-deterministic GC pauses can affect latency | Deterministic memory management with explicit control |
| Startup & warm-up | Requires JIT warm-up and profiling to reach peak | Loads immediately as native code; no warm-up boundary |
| Interop/bridging costs | Cross-language calls add overhead (bindings/WASM crossing) | Minimal overhead when using well-designed native interfaces |
| Best use cases | UI, scripting, and web app orchestration | Compute-heavy kernels and system-level tasks |
| Tooling and profiling maturity | Vibrant browser/devtooling + Node.js profilers | Industry-standard C/C++ profiling ecosystems and debuggers |

Benefits

- Excellent integration with web standards and platforms

- Rapid iteration and a rich ecosystem for JS

- Strong tooling for profiling, debugging, and iteration

- Growing use of WASM to bridge performance-critical paths

- Cross-platform capabilities with broad community support

Drawbacks

- GC pauses can introduce unpredictable latency

- Non-deterministic performance in GC-heavy workloads

- Interop costs can erode gains if not designed carefully

- Browser sandboxing can limit certain optimizations

C++ generally offers higher raw speed for CPU-heavy tasks; JavaScript excels in web-focused workloads with strong tooling and rapid development. WASM bridges can narrow the gap for hot paths.

For compute-heavy workloads, prefer C++ or WASM. For UI-driven and cross-platform apps, JavaScript remains the pragmatic choice, especially with careful profiling and architecture decisions.

Questions & Answers

Why is C++ often faster than JavaScript for CPU-bound tasks?

C++ compiles to native machine code, giving direct access to memory and instruction optimizations. JavaScript runs on a managed runtime with JIT and garbage collection, which introduces overhead and unpredictable latency under memory pressure.

C++ tends to be faster for heavy computations because it compiles directly to machine code and avoids garbage collection, whereas JavaScript runs in a managed environment with JIT and GC overhead that can affect latency during intense workloads.

Does WebAssembly change the performance comparison?

Yes, WASM can bring near-native performance for compute-heavy paths by running code compiled from C/C++ or Rust. The gains depend on memory access patterns and the cost of crossing JS↔WASM boundaries.

WASM can narrow the gap for hot paths by running native-compiled code, but you must account for interop costs and memory layout when evaluating overall performance.

Can JavaScript ever beat C++ in raw speed?

In very specific, well-optimized scenarios, JavaScript can compete on tasks where the bottleneck lies in I/O, orchestration, or where engine optimizations dominate. Raw compute-heavy workloads typically favor C++.

It's rare for JavaScript to beat C++ on raw number-crunching tasks, but for many real-world apps, JS performance is sufficient when combined with good architecture and profiling.

How can I improve JavaScript performance in my project?

Profile real workloads, optimize hot paths, minimize allocations, and avoid frequent DOM touches. Consider typed arrays, stable APIs, and batching to reduce GC pressure and improve cache locality.

Profile with real workloads, optimize hot paths, and minimize allocations. Use typed arrays and batch work to keep JavaScript fast.

Is Node.js faster than the browser for compute tasks?

Not inherently. Node.js runs on V8, the same engine used by Chromium-based browsers, so pure-JavaScript compute performance is broadly comparable; Node.js's strength lies in its efficient I/O and concurrency model. For compute-heavy tasks, offloading to native modules or WASM often yields better results than pure JavaScript in either environment.

Node.js does well with I/O, but for heavy computation you may reach the same bottlenecks as the browser—consider native modules or WASM for compute-heavy work.

What are best practices for performance-critical modules?

Isolate heavy work in separate workers or WASM modules, minimize cross-boundary data transfer, and profile end-to-end paths. Use efficient data structures and avoid unnecessary allocations to keep latency predictable.

Put heavy work in workers or WASM, minimize crossing data between JS and native code, and profile the whole path to keep latency predictable.

What to Remember

- Identify workload type: CPU-bound vs I/O-bound

- Use WASM to bridge hot paths when necessary

- Profile with realistic workloads to guide decisions

- Leverage engine optimizations and memory management knowledge

- Adopt a hybrid approach where appropriate for best results